In my previous post, I provided a walkthrough of how to create and debug Azure Functions with HTTP triggers locally. In this post, let's create an Azure Function with a Service Bus trigger and see how to accomplish the following:


- Creating Azure Functions with Service Bus trigger using VS Code

- Dockerizing the Azure Functions using Azure Functions Core Tools

- Deploying the Azure Functions to local kubernetes cluster

- Auto scaling the Azure Functions using KEDA

Prerequisites:

- Visual Studio Code

- Azure Tools Extension for VS Code

- Azure Functions Core Tools

- Docker Desktop for Windows/Mac

- Kubernetes (minikube or the cluster built into Docker Desktop)

- KEDA

- Azure Subscription

- An Azure Service Bus namespace in that subscription

1. Creating Azure Functions with Service Bus Trigger using VS Code

Follow the steps below to create a new Azure Functions project.

1.1 Choose language

1.2 Choose Framework

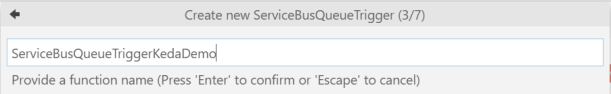

1.3 Choose Function Name

1.4 Choose Namespace

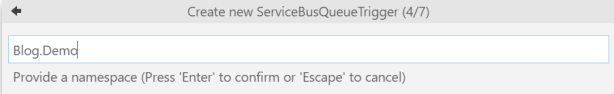

1.5 Choose LocalSettings

local.settings.json is where the Azure Functions project stores the connection settings for Service Bus and Azure WebJobs Storage (used for local debugging).

1.6 Choose Azure Service Bus

If you have signed in to Azure on your machine using the Azure CLI (az-cli), VS Code pulls the subscription information from that account and lists the Service Bus namespaces available in that subscription.

1.7 Choose Queue within Azure Service Bus

Pressing Enter after selecting all of the above will create a new Azure functions project.

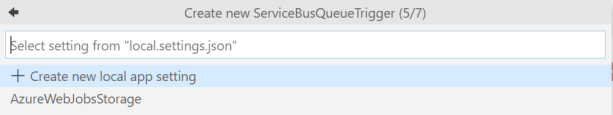

To test the Service Bus trigger, we can use Azure Service Bus Explorer to post messages to the queue. Every time a new message is posted, the Azure Function should be triggered and read the message from the queue. To run the function locally, open the Debug view and click the Play icon. As shown below, the message that was posted in Service Bus Explorer was read by the function.

2. Dockerizing using Azure Functions Core Tools

Azure Functions can be deployed directly to Azure using the VS Code extension. In this post, however, we will containerize the Azure Functions so that we can deploy them to Kubernetes. If you are wondering why you would deploy to Kubernetes instead of deploying directly to Azure and relying on Azure's autoscaling, check out the reasons below from the KEDA FAQ (https://keda.sh/faq/):

- Run functions on-premises (potentially in something like an ‘intelligent edge’ architecture)

- Run functions alongside other Kubernetes apps (maybe in a restricted network, app mesh, custom environment, etc.)

- Run functions outside of Azure (no vendor lock-in)

- Specific need for more control (GPU enabled compute clusters, policies, etc.)

2.1 Create a docker image

To dockerize the Azure Functions project, run the command below from the root folder of the project:

```shell
func init --docker-only
```

This creates a Dockerfile and a .dockerignore file in the project. The command automatically detects the runtime of the project and generates the Dockerfile accordingly. You should see the following contents in the Dockerfile:

```dockerfile
FROM mcr.microsoft.com/dotnet/core/sdk:3.0 AS installer-env

COPY . /src/dotnet-function-app
RUN cd /src/dotnet-function-app && \
    mkdir -p /home/site/wwwroot && \
    dotnet publish *.csproj --output /home/site/wwwroot

# To enable ssh & remote debugging on app service change the base image to the one below
# FROM mcr.microsoft.com/azure-functions/dotnet:3.0-appservice
FROM mcr.microsoft.com/azure-functions/dotnet:3.0
ENV AzureWebJobsScriptRoot=/home/site/wwwroot \
    AzureFunctionsJobHost__Logging__Console__IsEnabled=true

COPY --from=installer-env ["/home/site/wwwroot", "/home/site/wwwroot"]
```

Build the image by running the below command

```shell
docker build -t servicebusqueuetriggerkedademo .
```

Once the command succeeds, run `docker images` to check for the new image named `servicebusqueuetriggerkedademo`.

2.2 Test the docker image

The environment variables needed by the Azure Function are in the local.settings.json file. My file has two variables:

- AzureWebJobsStorage

- swamisbdemo_SERVICEBUS

When starting a Docker container from the image we just built, we need to pass values for these two variables. The values can be taken from the `local.settings.json` file and passed to the command below:

```shell
docker run \
  -e AzureWebJobsStorage="Your Azure WebJobs Storage setting from local.settings.json" \
  -e swamisbdemo_SERVICEBUS="Your service bus connection setting from local.settings.json" \
  servicebusqueuetriggerkedademo:latest
```
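Copying long connection strings by hand is error-prone, because they contain characters such as `;` that the shell treats specially. The sketch below is a hypothetical Python helper (not part of the Core Tools) that reads the `Values` section of local.settings.json and builds the `docker run` arguments for the settings you name; the function names and the choice of which settings to pass are assumptions for illustration.

```python
import json
import shlex
from pathlib import Path


def docker_run_args(settings_path, image, names):
    """Build a `docker run` argument list that passes the named settings
    from the "Values" section of local.settings.json as -e variables."""
    settings = json.loads(Path(settings_path).read_text())
    values = settings.get("Values", {})
    args = ["docker", "run"]
    for name in names:
        if name not in values:
            raise KeyError(f"{name} not found in Values of {settings_path}")
        # -e NAME=VALUE sets one environment variable in the container
        args += ["-e", f"{name}={values[name]}"]
    args.append(image)
    return args


def docker_run_command(settings_path, image, names):
    """Same arguments joined into one shell-quoted string, so values
    containing ';' or spaces survive copy-pasting into a terminal."""
    return shlex.join(docker_run_args(settings_path, image, names))
```

For example, `docker_run_command("local.settings.json", "servicebusqueuetriggerkedademo:latest", ["AzureWebJobsStorage", "swamisbdemo_SERVICEBUS"])` returns a command you can paste into a shell; alternatively, pass the list from `docker_run_args` to `subprocess.run` to avoid shell quoting altogether.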

You should see the Azure Function running in a Docker container. Check the container ID by running `docker ps`, and view the container's logs by running `docker logs <container-id>`.

If we post a message using Service Bus Explorer, the function running inside the Docker container should read it. Here is an excerpt from the logs:

```
info: Function.ServiceBusQueueTriggerKedaDemo[0]
      Executing 'ServiceBusQueueTriggerKedaDemo' (Reason='', Id=c040c8bf-a1e8-4dc1-9562-3599942b8f63)
info: Function.ServiceBusQueueTriggerKedaDemo[0]
      Trigger Details: MessageId: 0b1d933c-ec90-40af-ba92-c55933b0d1b7, DeliveryCount: 1, EnqueuedTimeUtc: 03/15/2020 16:51:01, LockedUntilUtc: 03/15/2020 16:51:31, SessionId: (null)
info: Function.ServiceBusQueueTriggerKedaDemo.User[0]
      C# ServiceBus queue trigger function processed message: Hi Docker, how are you?
info: Function.ServiceBusQueueTriggerKedaDemo[0]
      Executed 'ServiceBusQueueTriggerKedaDemo' (Succeeded, Id=c040c8bf-a1e8-4dc1-9562-3599942b8f63)
info: Function.ServiceBusQueueTriggerKedaDemo[0]
      Executing 'ServiceBusQueueTriggerKedaDemo' (Reason='', Id=5191c8d4-197c-44e0-ab9d-5a538114611b)
info: Function.ServiceBusQueueTriggerKedaDemo[0]
      Trigger Details: MessageId: f98ab6e6-32c7-4f26-bb0d-9ff871bac9d3, DeliveryCount: 1, EnqueuedTimeUtc: 03/15/2020 16:52:50, LockedUntilUtc: 03/15/2020 16:53:20, SessionId: (null)
info: Function.ServiceBusQueueTriggerKedaDemo.User[0]
      C# ServiceBus queue trigger function processed message: Hi Docker again, how are you?
info: Function.ServiceBusQueueTriggerKedaDemo[0]
      Executed 'ServiceBusQueueTriggerKedaDemo' (Succeeded, Id=5191c8d4-197c-44e0-ab9d-5a538114611b)
```

3. Deploying to Kubernetes using Azure Functions Core Tools

Now that we have built the image for the Azure Function, it's time to deploy it to Kubernetes. For simplicity, I am using the Kubernetes cluster that comes with Docker Desktop for Windows. The commands below should work with any Kubernetes cluster.

3.1 Push the image to Container registry

First, we need to push the Docker image to a container registry. In this case, I am pushing it to Docker Hub by running the commands below:

```shell
docker tag servicebusqueuetriggerkedademo:latest wannabeegeek/servicebusqueuetriggerkedademo:latest
docker push wannabeegeek/servicebusqueuetriggerkedademo:latest
```

3.2 Create kubernetes deployment

To deploy to Kubernetes, secrets need to be created for the environment variables in the local.settings.json file. This can be done in multiple ways; for simplicity, I let the Azure Functions Core Tools do it for me. By default, the tool Base64-encodes the values and creates the Secret. Run the command below to generate the deployment:

```shell
func kubernetes deploy --name "servicebusqueuetriggerkedademo" --image-name "wannabeegeek/servicebusqueuetriggerkedademo:latest" --dry-run > funcKedaDeploy.yml
```

The `--dry-run` flag generates the necessary YAML without applying it to the cluster, and the command output is redirected to funcKedaDeploy.yml.
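Kubernetes expects every value under a Secret's `data` field to be base64-encoded. The sketch below is a hypothetical Python helper, not the Core Tools' own code, that builds such a Secret from a dictionary of settings (the `default` namespace is an assumption). It also shows that the encoding is trivially reversible: base64 is not encryption, so anyone who can read the Secret can read the connection strings.

```python
import base64


def secret_manifest(name, values, namespace="default"):
    """Return a Kubernetes Secret (as a dict) whose `data` entries are the
    base64-encoded setting values, the form Kubernetes expects under `data`."""
    data = {
        key: base64.b64encode(value.encode("utf-8")).decode("ascii")
        for key, value in values.items()
    }
    return {
        "apiVersion": "v1",
        "kind": "Secret",
        "metadata": {"name": name, "namespace": namespace},
        "data": data,
    }


def decode_secret_value(encoded):
    """Reverse the encoding: base64 only hides the value from casual view."""
    return base64.b64decode(encoded).decode("utf-8")
```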

4. Auto Scaling using KEDA

The YAML file should contain three Kubernetes resources:

- Secret

- Deployment

- ScaledObject

ScaledObject is the custom resource (defined by a KEDA CRD) that KEDA uses to autoscale the pods. More details on the ScaledObject for Service Bus can be found in the KEDA documentation.

Below is the ScaledObject definition generated by the command above:

```yaml
apiVersion: keda.k8s.io/v1alpha1
kind: ScaledObject
metadata:
  name: servicebusqueuetriggerkedademo
  namespace: default
  labels:
    deploymentName: servicebusqueuetriggerkedademo
spec:
  scaleTargetRef:
    deploymentName: servicebusqueuetriggerkedademo
  triggers:
  - type: azure-servicebus
    metadata:
      type: serviceBusTrigger
      connection: swamisbdemo_SERVICEBUS
      queueName: myqueue
      name: myQueueItem
```

- `scaleTargetRef`: the deployment that needs to be scaled up/down
- `triggers`: the type of trigger used to scale the deployment; in this case, `azure-servicebus`

You might also notice that the Deployment does not specify a minimum number of replicas, because the pods are scaled up and down automatically by KEDA, which uses a Horizontal Pod Autoscaler (HPA) for this. Check out the KEDA documentation to understand how KEDA works. Before deploying the YAML file, we need to make a couple of changes to the ScaledObject:

- `pollingInterval`: the interval at which KEDA checks each trigger (in this case, the queue length). Default: 30 seconds.
- `cooldownPeriod`: the time to wait after the trigger was last active before scaling the deployment down to 0. Default: 300 seconds.
- `queueLength`: the target number of active messages in the queue that KEDA uses to decide when and how far to scale. Default: "5".

The final ScaledObject should look like this:

```yaml
apiVersion: keda.k8s.io/v1alpha1
kind: ScaledObject
metadata:
  name: servicebusqueuetriggerkedademo
  namespace: default
  labels:
    deploymentName: servicebusqueuetriggerkedademo
spec:
  scaleTargetRef:
    deploymentName: servicebusqueuetriggerkedademo
  pollingInterval: 10
  cooldownPeriod: 30
  triggers:
  - type: azure-servicebus
    metadata:
      type: serviceBusTrigger
      connection: swamisbdemo_SERVICEBUS
      queueName: myqueue
      name: myQueueItem
      queueLength: "5"
```
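To get a feel for how `queueLength` drives scaling, here is a rough Python approximation of the replica count that the HPA created by KEDA would aim for. It assumes the usual HPA rule for an average-value external metric, desired replicas = ceil(active messages / queueLength), plus KEDA's documented defaults of `minReplicaCount: 0` and `maxReplicaCount: 100` (neither is set in the ScaledObject above). It deliberately ignores the HPA tolerance and stabilization behaviour, and the function name is hypothetical.

```python
import math


def approximate_replicas(active_messages, queue_length_target=5,
                         min_replicas=0, max_replicas=100):
    """Rough estimate of the replica count KEDA + HPA aim for.

    Simplified: ignores the HPA tolerance, stabilization windows,
    pollingInterval timing and the cooldownPeriod delay before scaling to 0.
    """
    if queue_length_target <= 0:
        raise ValueError("queue_length_target must be positive")
    if active_messages <= 0:
        # Trigger inactive: KEDA scales to min_replicas (0 by default)
        # once cooldownPeriod has elapsed.
        return min_replicas
    # HPA with an average-value target: ceil(total metric / per-pod target).
    desired = math.ceil(active_messages / queue_length_target)
    return max(min_replicas, min(desired, max_replicas))
```

For example, with `queueLength: "5"`, 12 active messages gives `approximate_replicas(12) == 3`, while an empty queue lets KEDA scale back to 0 once the 30-second `cooldownPeriod` has passed.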

Now it's time to see KEDA in action. Deploy the entire YAML file created in step 3.2 by running the following command:

```shell
kubectl apply -f funcKedaDeploy.yml
secret/servicebusqueuetriggerkedademo created
deployment.apps/servicebusqueuetriggerkedademo created
scaledobject.keda.k8s.io/servicebusqueuetriggerkedademo created
```

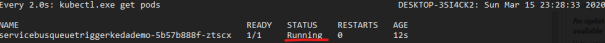

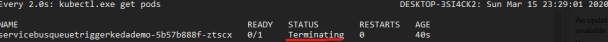

Once the deployment succeeds, you can start pushing messages to the Service Bus queue and watch KEDA automatically scale the pods up and down depending on the queue length. I have created a basic .NET Core console app to push messages to Service Bus; the sample application's code is available on GitHub at https://github.com/svswaminathan/ServiceBusMessageGenerator. Update the connection string for your Service Bus and experiment with KEDA by changing `threadCount` and `iterationCount`.

Sample screenshots below:

As you can see, KEDA automatically scales the pods up and down based on various triggers. For more details on the architecture and concepts behind KEDA, check out https://keda.sh

That's a wonderful article. Do you have a section on enabling MSI in Kubernetes with KEDA? Our Azure Functions are already MSI-enabled.

Thanks very much, Alex. Though I haven't tried it with managed identity myself, according to the documentation it's possible to authenticate using either a connection string or pod identity. Is this what you are looking for? https://keda.sh/docs/scalers/azure-service-bus/#example

The problem I am facing is that the command "func kubernetes deploy" (dry run) should create 3 CRDs; instead, the generated file contains only the Secret, and the ScaledObject and Deployment are missing.

I tried both approaches (signed in to Docker Hub, and to ACR) before running this.

Any idea what I am missing here?

Sir,

Can you please confirm whether we can change the default connection string parameter name "swamisbdemo_SERVICEBUS" to a custom name? My Docker image isn't starting after the build, but my code runs successfully locally in the IDE. I have changed the original parameter name for the Service Bus connection string.

Yes, that's very much possible. Please make sure you use the modified environment variable name everywhere!